Here’s a quick report on having gone nearly 100% gradeless. I say nearly because at my school, the halfway point of the quarter (i.e., progress reports) requires a grade. So, as of right now there’s a course grade that shows up. This practice isn’t quite in line with a true ungrading approach that would have a grade only at the very end of the grading period. I’m nearly there, and have a feeling this is as far as I’ll go, too. But that’s not a problem. There’s already been a big difference in the most important areas, and I expect things to get even better.

Learning Evidence & Rationale

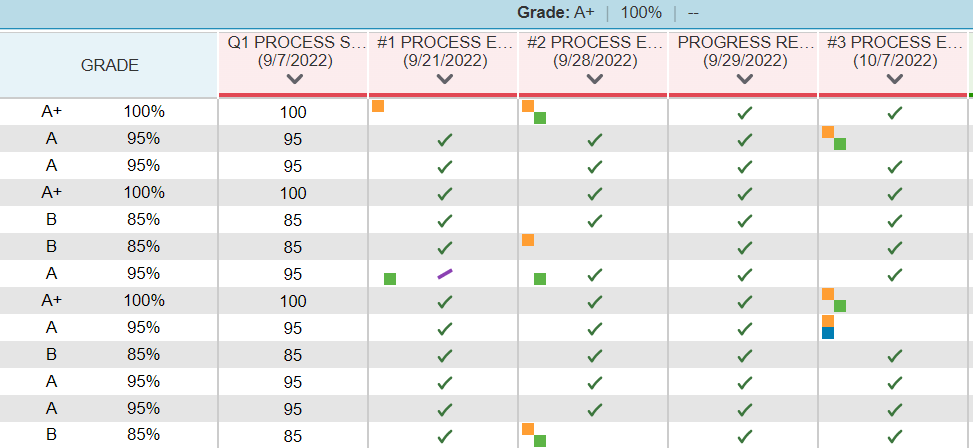

For years, I’d give a quick quiz of four T/F statements, put the results (0-4) in the gradebook, and I’d have a body of learning evidence to justify the course grade. That was pretty much my M.O. for a bit. In recent years, though, I got away from quizzes and moved towards students submitting notebook pictures of their weekly work. These were graded on a 3-point scale based on completion (i.e., 55, 75, 100). That was then. This year, students now choose what to put in their portfolio for learning evidence. Oh, and I’ve stopped grading all assignments, entirely. No numbers. No completion rubrics. None of that. Instead of grades, I use gradebook codes, like a check mark to show collected, or “m” to show something is missing and students are expected to turn it in, as well as others showing absenteeism, etc.

As you can see above, the learning evidence is all there. That course grade is determined by students evaluating their evidence against a single holistic rubric, but there are no grades for individual assignments. I’m 6 weeks into doing this, and can already see how silly it was attaching a grade to ANY of that stuff. In short, even the 3-point-scale completion rubric caused me to try and evaluate the difference between the levels. Yet the reality is that not much was clearly defined. Is a picture of just a few Latin words on a notebook page considered “incomplete?” Is that a grade of 55, or 75? Does a whole page get the 100? Where’s the cutoff? Even writing very specific criteria into each level could never really account for all instances, either. There was still a lot of guessing during the evaluation process while reviewing student work and slapping a grade on it. And that guessing required a lot of brainpower. Too much, in fact.

So, instead of me doing all that, students now add to their portfolio throughout the quarter, self-assess and self-grade, then provide a rationale supporting what they chose. What they’ve had to say has been enlightening. For example, I thought a particular student had missed a LOT of classes, but it turns out it was only two. I just misremembered. When I checked their rationale, it addressed the absences as well as how their learning evidence supported the grade they chose. In the past, I’d maybe look at a list of quick quiz and notebook scores, like 75, 75, 100, 85, and have to determine what best represented student learning, most often looking for patterns and using the trend/mode. Now, not only are the numbers completely out of the equation (jajaja, get it?), but so is me having to try and evaluate the difference between what a 75 notebook looks like vs. a 100. Now, it’s all in the student’s rationale, which has been a sentence or two on a Google Form (after they add to their portfolio). The focus is on the actual content and expectations, not grades.

Feedback

Also, I’ve already given more specific and useful feedback than I probably have in all ten years of teaching! How? First and foremost, I surely do not praise (re: Punished by Rewards, Kohn, 1993). What I do is take more of an “SE2R” approach from Mark Barnes:

Summarize (e.g., “You wrote a one-page summary of the topic.”)

Explain (e.g., “You defined the similarities between X and Y. I didn’t see any statements to support your claim, though. Do you see them?”)

Redirect (e.g., “Add supporting statements and resubmit.”)

Resubmit (e.g., “When you finish, send me an email.”)

In practice, I’ve been doing a quick copy/paste of the student’s rationale (i.e., “You said…”), then a reply that their learning evidence doesn’t support their statement, and what to do about it (e.g., “add something to your portfolio that shows…”). Even a single sentence from a student shows whether they’re understanding the connection between what they submitted and what they think it represents. This connection provides all the basis we need for justifying the grade a student thought best represents their learning. The best part?

I’m just here to guide, not to “give out” any grades.

Grades aren’t even “earned.” Students are arriving at them logically, taking several moments throughout the year to reflect on their learning. This is student ownership. What does it look like once students submit the rationale? As with most self-assessed grades, I start with the highest and lowest first, then work inwards. Unless students are way off, I let grades of 100, 95, and 55 stand as they are. The 100 is symbolic since 93 and up counts as 4.0 towards GPA, and the 55s are usually real easy to spot. Working inward, if a student offers a reasonable explanation for why they chose a 75 or 85, I go with it. Those students typically know they’re not meeting expectations as well as they could, and I agree. It’s mostly the 65s that I might encourage being changed to 75.
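For anyone who likes to see a routine written out, here is a minimal sketch of that review order in Python. It is only an illustration: the function and variable names are hypothetical, nothing here comes from actual gradebook software, and the "way off" and "reasonable explanation" calls still take a teacher reading the rationale.

```python
# Hypothetical sketch of the review routine described above.
# Names are illustrative only; the judgment calls remain human.

# Default starting point for each self-assessed grade.
DEFAULT_ACTION = {
    100: "let stand",
    95: "let stand",
    85: "accept if the rationale is reasonable",
    75: "accept if the rationale is reasonable",
    65: "consider encouraging a change to 75",
    55: "let stand",
}


def review_order(grades):
    """Put the highest and lowest grades first, then work inward."""
    midpoint = (max(grades) + min(grades)) / 2
    # Farthest from the midpoint first; on a tie, the higher grade first.
    return sorted(grades, key=lambda g: (-abs(g - midpoint), -g))


submitted = [75, 100, 65, 55, 85, 95]
for grade in review_order(submitted):
    print(grade, "->", DEFAULT_ACTION[grade])
```

With one submission at each level, this visits 100 and 55 first and ends with 85 and 75, which is the "work inwards" pattern.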

1. Do you enter those “Process” place markers as test, daily, or non-credit bearing?

2. How often do the students do a self-evaluation?

3. What is the rubric they use to self-evaluate?

4. What do parents and administrators think of this system?

5. Why do you prefer for students to submit their self-evaluations online/digitally?

6. Do you ultimately make executive decisions about the grade if it seems like the student’s self-evaluation is off base?

7. You said you give feedback more than ever. Do you give feedback on their self-evaluations specifically, or on their daily activities, or both?

Thanks if you have time to answer these!

1) I don’t really know what that means, but they are weekly-or-so assignments in a “Process Learning Evidence” category that’s floating in the ether. It doesn’t calculate anything (but assignments are set up to be “Collected Only” with no points attached so they wouldn’t calculate anyway). The course grade is 100% standards.

2) Once per quarter at the end, and this first halfway progress report moment. So, 5 total. After quarter 1 ends I’ll just carry over the grade which will be self-assessed at the end.

3) See this post (https://magisterp.com/2022/08/23/2022-23-grading-process-growth/) for rubrics, or the Materials section.

4) Radio silence from parents, etc., but that’s mostly because these stakeholders basically just want to see records and progress. They rarely ask about how things are determined. Admin is on board. I’m leading grading efforts at school.

5) Easier to manage and access, absent students can still upload things to their portfolio whenever, and nothing for me to collect, etc.

6) Yes, but like the research shows, this is rare.

7) Both. Depends on what the student says about what they added, and/or how it shows meeting expectations.

I agree wholeheartedly that attaching numbers to student work is not only absurd but also creates a lot of undue stress for teachers. Ungrading is by far one of the most groundbreaking practices I have ever implemented and you could not pay me to go back to traditional evaluation.