My one-standard-self-assessed grading system of receiving input (re: Input Expectations rubric) has been working out just fine for several years now. “Fine” is…well…fine…but we as educators should be open to refining practices whenever we get new data, especially whenever “fine” has the opportunity to become something awesome. This year I was able to do something better, getting ever so close to that awesome. If what I’ve been doing could be considered 85% of the way towards equitable, time-saving grading that shifts focus to learning, I’m now at probably 90%.

These updates are the result of some research I’ve been doing using primary sources from Grading For Equity (Feldman, 2018), Fair Isn’t Always Equal (Wormeli, 2018), Assessment 3.0 (Barnes, 2015), Hacking Assessment (Sackstein, 2015), and Ungrading (Blum & Kohn, 2020), along with 20 or so additional research reports on related topics. Updates included introducing new standards one by one, with their weights changing throughout the year. The system also moved from 100% self-graded to 100% teacher-graded. I’m keeping some of these updates for next year, but more on that later on. Let’s take a look at those standards, first…

Process

Process refers to the things students *must* do to acquire language. It’s basically what the Input Expectations rubric was all along years before. Rather than a set of bias-ridden controlling rules that have circulated the language teaching profession for some time, though (e.g., “eyes up front, nothing in laps/on desk, intent to understand,” etc.), there is no dispute that students need input, and there are only three modes of doing so: reading, viewing, and listening. That’s it, and there’s no way to distill it further. Since a focus on providing input requires plenty of time and energy, there’s not much convincing reason to do or grade much else. Therefore, my grading system aligns with the instructional design 1:1.

The processes found in the older Input Expectations rubric have been 100% of my students’ grade for years. Students self-assess how well they’ve been receiving input about four times per year, and that’s it. How do they receive input? Well, I’ve been working under the Look, Listen, Ask framework, but have now separated out the last of those, Ask, into a workflow of Respond/Show/Ask. If students Respond (target language takes priority, but English is fine), we’re good to go. If they can’t, students Show their understanding (e.g., gestures, expressions, etc.). If students can’t do either, though, then it’s time to Ask. This update has the benefit of getting more engagement from students without requiring some kind of “choral response” rule. Also, the students who can respond, but choose not to, start to realize it’s easier to do so than to have me check their comprehension (because I didn’t get any data, and no data is bad).

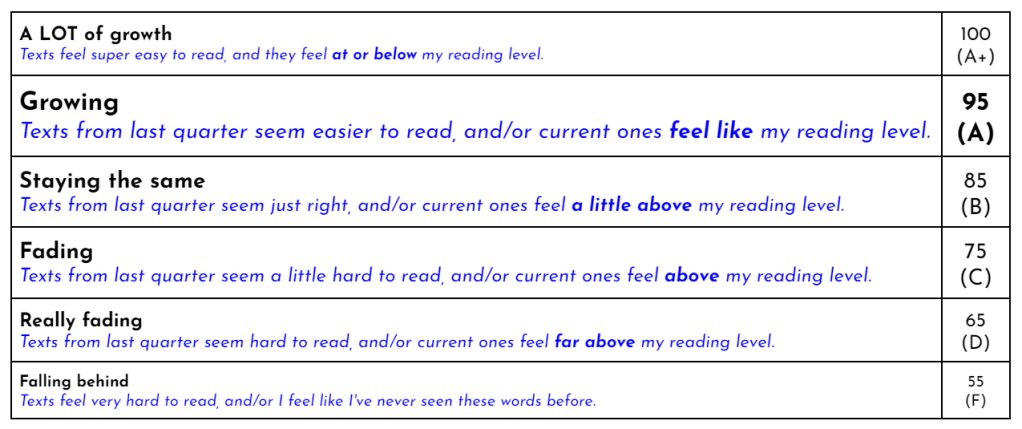

The image above represents the Process of receiving input (and ensuring it’s comprehensible as often as possible). This standard was 100% of Quarter 1, and self-graded according to a simplified rubric (shown below) that replaces the Input Expectations one I was using for years. The criteria include a combination of the amount of input (I), as well as the degree to which that input is comprehensible (C). Process was the main standard, and present for all grading terms except the final Quarter 4. N.B. once a student gets a 93, they have a 4.0 GPA. The top of the scale is there for the academic elite perfectionists who just couldn’t possibly be satisfied with a 93. It’s really just psychological.

Growth

Growth was also self-graded. Students compared their own experience reading an older text from Quarter 1 to a text from Quarter 2, reporting how it felt as a result of the Look & Listen and Respond/Show/Ask processes. Growth took into account ease of reading and reading level (see rubric below). Text comparisons were done by setting up a Google Form with an older text dropped in, plus something newer. That’s it. This standard made its appearance in Quarter 2 only, with equal weight given to both it and Process (50% each), both self-graded (100%).

Proficiency

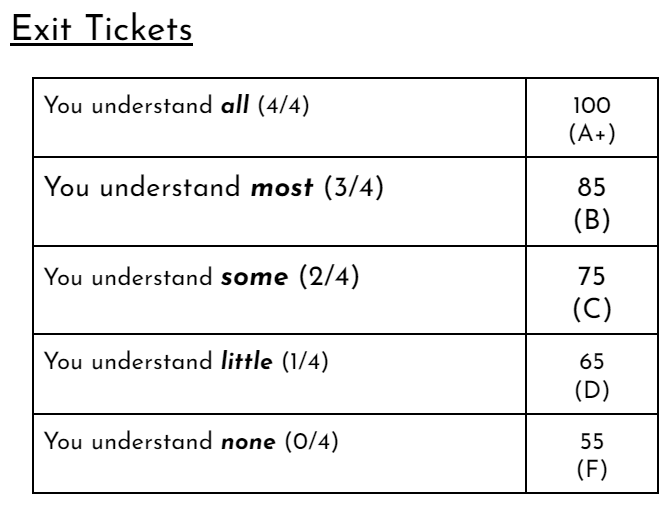

Yup, I came around full circle to this, but my time away from it was well-spent. I brought back quick quizzes, though rebranded as Exit Tickets. That’s actually a much better name for them since they’re not predetermined, reflecting only what happened in class.

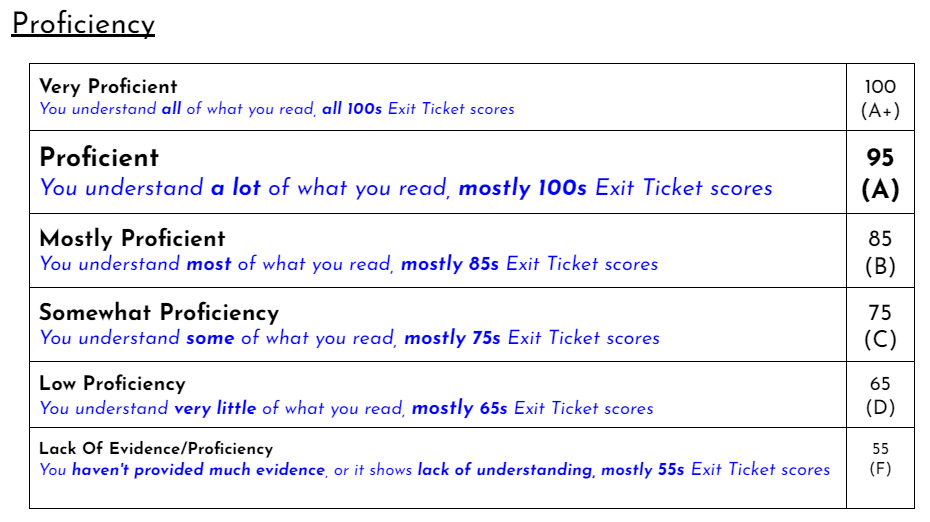

Although we scored these in class together according to the rubric above, the ultimate determination of a student’s Proficiency grade was up to me based on the trend, or mode, of Exit Ticket scores (see rubric below). If you’re wondering how that went, it was easy. Of the 6-8 Exit Ticket scores, one number clearly appeared the majority of the time for each student: that was the grade I put in the Proficiency standard. This standard replaced Growth in Quarter 3, so there was equal weight of Process & Proficiency, and 50% self-graded, 50% teacher-graded.
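For anyone who’d rather let a spreadsheet or script find that “one number,” here’s a minimal sketch of picking the mode. The function name, the sample scores, and the choice to return nothing on a tie (leaving the call to the teacher) are all my assumptions for illustration, not part of the rubric.

```python
from collections import Counter


def proficiency_grade(exit_ticket_scores):
    """Return the most frequent Exit Ticket score, or None if there is no clear mode.

    Hypothetical helper: the tie rule (return None and decide by hand)
    is an assumption, not something the rubric specifies.
    """
    if not exit_ticket_scores:
        return None  # no evidence yet, nothing to report

    # Count how often each score appears, most frequent first.
    ranked = Counter(exit_ticket_scores).most_common()
    top_score, top_count = ranked[0]

    # If a second score appears just as often, there is no single mode.
    if len(ranked) > 1 and ranked[1][1] == top_count:
        return None

    return top_score


# Made-up scores for one student across seven Exit Tickets.
print(proficiency_grade([85, 95, 85, 85, 75, 85, 95]))
```

With 6-8 scores per student, a tie should be rare, which matches how easy this was to do by eye.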

Quarter 4 shifted all the focus to Proficiency (100%), which was teacher-graded (100%), and reflected the culmination of the year. The idea isn’t so much some kind of…“correction”…to the kinds of grades students give themselves, which I’ve heard teachers express concern about when it comes to self-assessing. The fears just don’t play out in reality, and research confirms this, too. Besides, I double-check, edit, and inform students, who rarely over- or underassess themselves throughout the year. Their grades and mine are usually identical. The messaging was more like the following: if you maintain the Process of receiving input throughout the year, your Growth will be more obvious, and your Proficiency in understanding Latin will increase. The end of the year is a good time to show this, so I had the final quarter focus only on that. Now, if you’d like to characterize the whole system as a hippy dippy self-graded approach at the start of the year that leads to something more objective and teacher-graded at the end, fine, I won’t stop you if it feels better.

Gradebook Evidence

Whereas the first quarter evidence was mostly pics of notebooks and other things done in class showing Process of receiving input, there were fewer of those in Quarter 2 once Growth was introduced with its text comparisons. Quarter 3 introduced Exit Tickets, which became nearly all the evidence in Quarter 4 along with other digital comprehension assessments. See? Grading shouldn’t be complicated!

Summary:

Q1 = Process (100% self-graded)

Q2 = Process & Growth (100% self-graded)

Q3 = Process & Proficiency (50% self-graded, 50% teacher-graded)

Q4 = Proficiency (100% teacher-graded)
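If it helps to see those weights as numbers, here’s a rough sketch of how a quarter grade could be computed from them. The 50/50 splits for Q2 and Q3 come from the descriptions above; the weighted-average formula, the names, and the sample scores are my assumptions about how a gradebook would combine standards.

```python
# Standard weights per quarter, taken from the summary above.
WEIGHTS = {
    "Q1": {"Process": 1.0},
    "Q2": {"Process": 0.5, "Growth": 0.5},
    "Q3": {"Process": 0.5, "Proficiency": 0.5},
    "Q4": {"Proficiency": 1.0},
}


def quarter_grade(quarter, scores):
    """Weighted average of a student's standard scores for one quarter.

    Assumes the gradebook combines standards as a simple weighted
    average; `scores` maps standard name -> score (hypothetical values).
    """
    weights = WEIGHTS[quarter]
    return sum(weight * scores[standard] for standard, weight in weights.items())


# Made-up Quarter 3 scores: Process self-graded, Proficiency teacher-graded.
print(quarter_grade("Q3", {"Process": 90, "Proficiency": 80}))
```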

What about next year? TL;DR: I’ll be moving back to an entirely self-assessed “ungrading” system, with only Process & Growth, every quarter. One key element will be students selecting their own learning evidence to add to their digital portfolio (a Google Doc). More on that late this summer when I set things up just before school starts. For now, I’m gearing up for another legit summer VACATION doing approximately 0% work aside from presenting these ideas July 5th during Conference in the Cloud 2022. Hope to see you there!